|

| 1 | +# Vibe RL Example: Building a "Who is the Spy" Agent Trainer from Scratch Without Writing a Single Line of Code |

| 2 | + |

| 3 | +> This article is a translated version of the [Chinese original](./example_vibe_rl_who_is_spy.zh.md). |

| 4 | +

|

| 5 | +## Abstract |

| 6 | + |

| 7 | +In reinforcement learning research, the journey from inspiration to writing code to generating the first successful training curve is long and tedious. Fortunately, with the AgentJet framework, going from idea to successful training is now just a matter of speaking up and spending a few minutes writing some prompts. After a short wait, you get to see **complete, concise, human-readable and editable training code** alongside **the first training curve** displayed before you. In this article, we use the classic "Who is the Spy" board game as an example to demonstrate the entire process of training an Agent without writing code. |

| 8 | + |

| 9 | +## Install AgentJet Environment |

| 10 | + |

| 11 | +You can choose to [install manually](https://doc.agentjet.top/en/installation/) or use skills. Run the following commands to copy skills into Claude Code or OpenCode: |

| 12 | + |

| 13 | +```bash |

| 14 | +npx skills add modelscope/agentjet |

| 15 | +npx skills add binary-husky/Vibe-RL |

| 16 | +``` |

| 17 | + |

| 18 | +After the skills are added, you can instruct Claude Code or OpenCode to install AgentJet using uv (or conda / docker). |

| 19 | + |

| 20 | +## Write the Prompt |

| 21 | + |

| 22 | +Once AgentJet is installed, you can get started right away. Open OpenCode (while ClaudeCode is more powerful, the author prefers fully open-source tools; moreover, Vibe RL difficulty in AgentJet is quite low, so we don't need a very strong agent), then select the claude-4.5-sonnet model (this model is faster than opus for reasoning speed and sufficient for tasks that aren't too difficult), and start executing the task: |

| 23 | + |

| 24 | +```txt |

| 25 | +Your task: |

| 26 | +- Write an agent that learns the "Who is the Spy" task, trained using a combination of reinforcement learning and supervised learning. The game rules are as follows: |

| 27 | + - The game has N players, most of whom are **civilians**, with a few being **spies** |

| 28 | + - At the start of the game, each civilian receives the same **civilian word**, and each spy receives a **spy word** that is similar to the civilian word but different (e.g., civilian word is "apple", spy word is "pear") |

| 29 | + - In each round, all players take turns giving **verbal descriptions** of their word. The description must truthfully reflect the word, but cannot directly say the word itself or expose the player's identity too obviously |

| 30 | + - After all players have described, the game enters the **voting phase**, where all players vote for who they think is the most suspicious spy. The player with the most votes is eliminated |

| 31 | + - The game continues for multiple rounds until one of the following end conditions is met: |

| 32 | + - **Civilians win**: All spies are eliminated |

| 33 | + - **Spies win**: The number of spies >= the number of civilians (spies have the numerical advantage) |

| 34 | + - The agent needs to master two core abilities through extensive gameplay: |

| 35 | + - **Description strategy learning**: Learn to generate optimal descriptions based on the agent's word and current game state that neither expose identity nor alienate teammates |

| 36 | + - **Reasoning and decision learning**: Learn to accurately identify spies based on conversation history, other players' description patterns, and behavioral characteristics, and make optimal voting decisions |

| 37 | + - Training objective: Maximize the agent's win rate across different roles (civilian/spy), continuously optimizing strategy through self-play and reward mechanisms |

| 38 | +- I want to use the base model `/mnt/data_cpfs/model_cache/modelscope/hub/Qwen/Qwen/Qwen2.5-7B-Instruct` |

| 39 | +- Use 8 GPUs for training |

| 40 | +- Batch Size 16 |

| 41 | +- I don't have a dataset yet, please help me mock some game data for testing and initial training |

| 42 | +- Use OpenAI SDK, flexibly use Tools |

| 43 | +- Code must not contain Chinese characters |

| 44 | +

|

| 45 | +Your skill (please read this SKILL file first to get necessary knowledge): |

| 46 | +./ajet/copilot/write-swarm-client/SKILL.md |

| 47 | +

|

| 48 | +- Additional requirements: |

| 49 | + - optional 0. (agent_roll) Team A civilians share one 7B model, Team B spies use qwen-max (DASHSCOPE_API_KEY is already in environment variables), |

| 50 | + each episode randomly assigns each player's ID and name (randomly generate a long list of random names), winner gets reward 1, loser gets reward 0 |

| 51 | + - optional 1. (agent_roll_adv) Adversarial training, Team A civilians share one 7B model (swarm server 1), Team B spies share another 7B model (swarm server 2), |

| 52 | + each episode randomly assigns each player's ID and name (randomly generate a long list of random names), winner gets reward 1, loser gets reward 0 |

| 53 | +

|

| 54 | +- Additional requirements: |

| 55 | + agent_roll: Use 4 GPUs |

| 56 | + agent_roll_adv: swarm server 1 and swarm server 2 each use 4 GPUs (total 8 GPUs) |

| 57 | +

|

| 58 | +- Additional requirements: Use tmux + uv's .venv for debugging until all bugs are fixed & training starts normally. You can use `spy-swarm-server`, `spy-swarm-server-2`, `spy-swarm-client` three tmux sessions |

| 59 | +

|

| 60 | + - Current debugging stage: |

| 61 | + Debugging agent_roll [Execute debugging] |

| 62 | + Debugging agent_roll_adv [Skip debugging] |

| 63 | +``` |

| 64 | + |

| 65 | +## Check Results |

| 66 | + |

| 67 | +### Generated Training Code |

| 68 | + |

| 69 | +Under the guidance of the agentjet skill, OpenCode generates all training code in `tutorial/opencode_build_***`: |

| 70 | + |

| 71 | +```bash |

| 72 | +(base) ➜ agentjet git:(main) ✗ tree tutorial/opencode_build_spy_game |

| 73 | +tutorial/opencode_build_spy_game/ |

| 74 | +├── mock_dataset.py # Generate mock game configurations |

| 75 | +├── mock_game_dataset.json # 200 game scenarios with word pairs |

| 76 | +├── game_engine.py # Core game mechanics and player logic |

| 77 | +├── agent_run.py # Agent executor for agent_roll mode |

| 78 | +├── agent_roll.py # Training script for agent_roll mode |

| 79 | +├── agent_run_adv.py # Agent executor for adversarial mode |

| 80 | +├── agent_roll_adv.py # Training script for adversarial mode |

| 81 | +└── readme.md # This file |

| 82 | +``` |

| 83 | + |

| 84 | +### Inspect the Training Swarm, Find and Fix Agent Training Bugs |

| 85 | + |

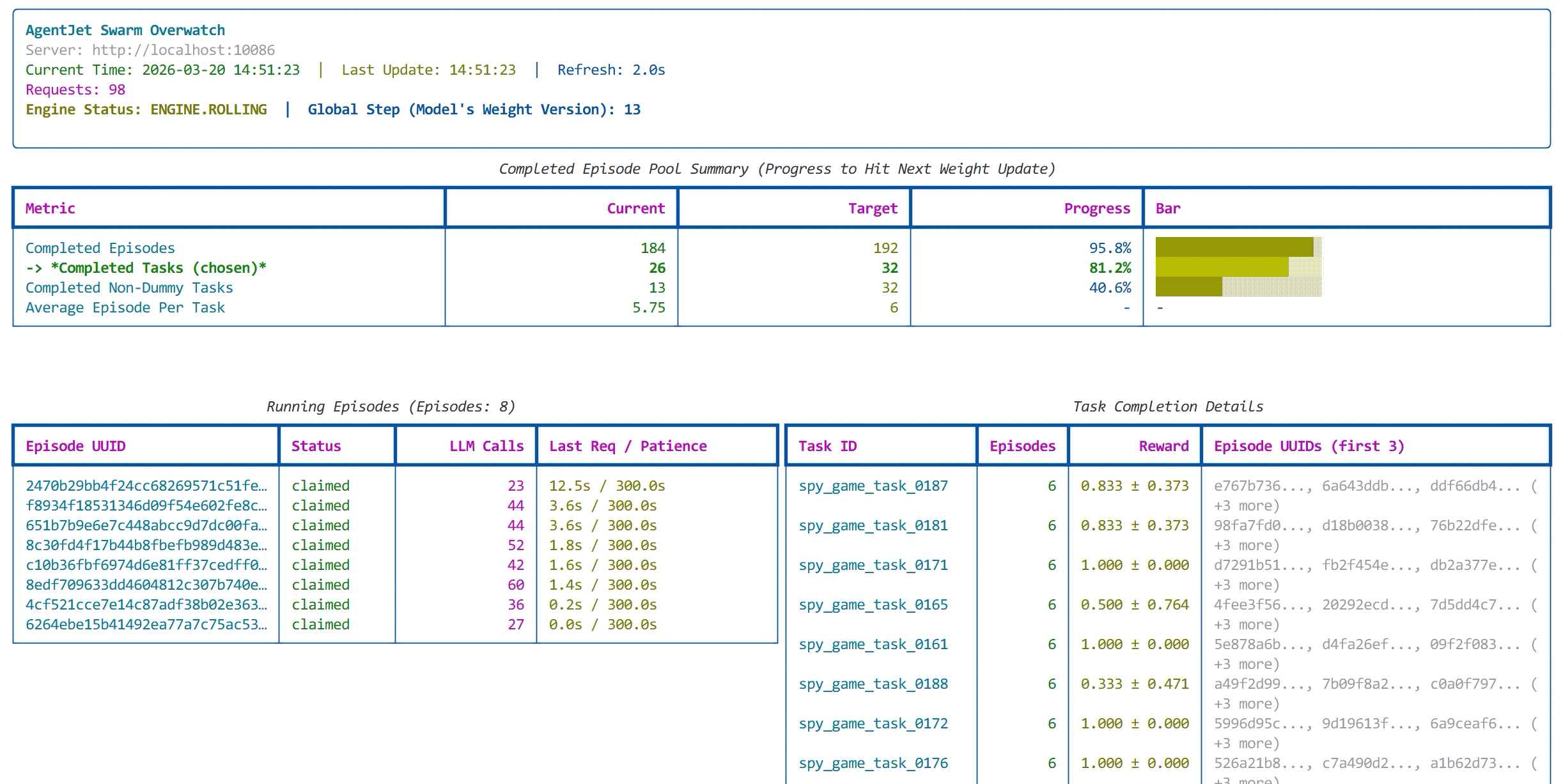

| 86 | +After waiting a while, running the `ajet-swarm overwatch` command shows the current training progress: |

| 87 | + |

| 88 | +```bash |

| 89 | + Completed Episode Pool Summary (Progress to Hit Next Weight Update) |

| 90 | +┏━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━┳━━━━━━━━━━━━━┳━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┓ |

| 91 | +┃ Metric ┃ Current ┃ Target ┃ Progress ┃ Bar ┃ |

| 92 | +┡━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━╇━━━━━━━━━━━━━╇━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┩ |

| 93 | +│ Completed Episodes │ 140 │ 16 │ 875.0% │ █████████████████████████████████████████████████████████████████████ │ |

| 94 | +│ │ │ │ │ █████████████████████████████████████████████████████████████████████ │ |

| 95 | +│ │ │ │ │ █████████████████████████████████████ │ |

| 96 | +│ -> *Completed Tasks (chosen)* │ 1 │ 4 │ 25.0% │ █████░░░░░░░░░░░░░░░ │ |

| 97 | +│ Completed Non-Dummy Tasks │ 1 │ 4 │ 25.0% │ █████░░░░░░░░░░░░░░░ │ |

| 98 | +│ Average Episode Per Task │ 140.00 │ 4 │ - │ - │ |

| 99 | +└────────────────────────────────────────┴─────────────┴─────────────┴──────────────┴───────────────────────────────────────────────────────────────────────┘ |

| 100 | + |

| 101 | + Task Completion Details |

| 102 | +┏━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┓ |

| 103 | +┃ Task ID ┃ Episodes ┃ Reward ┃ Episode UUIDs (first 3) ┃ |

| 104 | +┡━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┩ |

| 105 | +│ │ 140 │ 0.779 ± 0.448 │ b47d7b96..., 8caec2d7..., b48bd9fb... (+137 more) │ |

| 106 | +└──────────────┴───────────────┴───────────────────────┴───────────────────────────────────────────────────────────────────────────┘ |

| 107 | +``` |

| 108 | + |

| 109 | +From the swarm monitoring table, the sample pool has accumulated 875.0% (140) episode samples, but AgentJet hasn't started training yet. Looking closer, the Completed Tasks progress is only 1, meaning all 140 episodes were identified as one task. The task IDs for these samples? They're empty strings. No doubt, claude-sonnet produced a hilarious bug in the mock dataset. We give OpenCode a new directive: |

| 110 | + |

| 111 | +```txt |

| 112 | +task.task_id has a serious problem - task_id should be a random seed for each episode and must not be empty! |

| 113 | +``` |

| 114 | + |

| 115 | +While we're at it, we adjust some parameters: batch size from 4 to 32, grpo_n from 4 to 6. Then we have a cup of tea and come back. This time it works. |

| 116 | + |

| 117 | + |

| 118 | + |

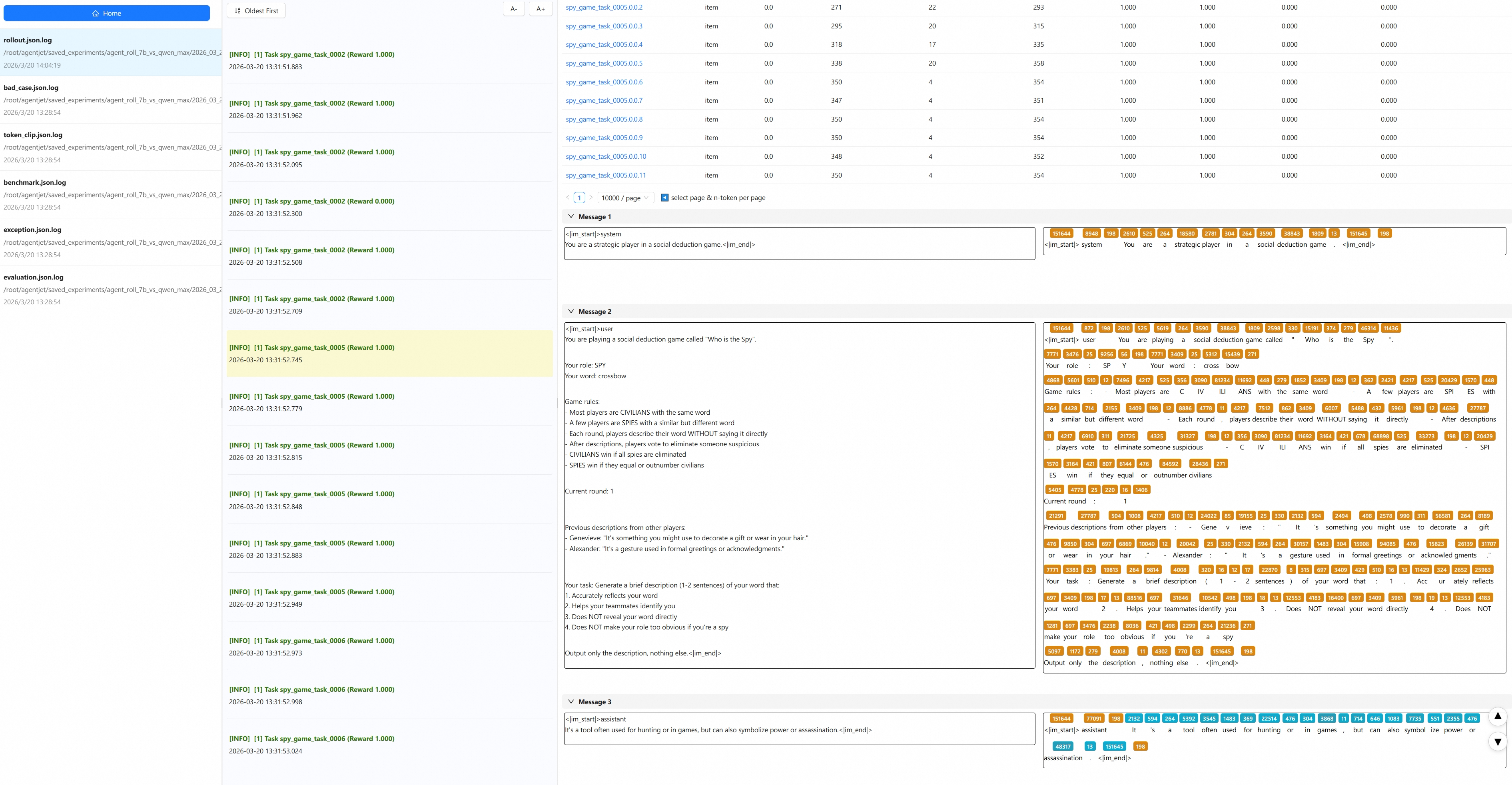

| 119 | +To ensure the agent logic is correct, we also open beast_logger (the log monitoring component that comes with agentjet): |

| 120 | + |

| 121 | + |

| 122 | + |

| 123 | +One look and sure enough, there are still issues (slightly regretting not using opus). Our requirement was that Team A civilians share one brain with a 7B model, while Team B spies use qwen-max. But why did a spy sneak into the civilian team? This time we need claude-sonnet to reflect carefully: |

| 124 | + |

| 125 | + |

| 126 | + |

| 127 | +After a while, we check again and the issues are all fixed. |

| 128 | + |

| 129 | +### Check Training Curves |

| 130 | + |

| 131 | +Heading over to SwanLab — not bad, the reward is steadily climbing. |

| 132 | + |

| 133 | + |

0 commit comments